When Vector RAG Goes Blind: The $14B Note 12 Miss in Apple’s 2023 10‑K

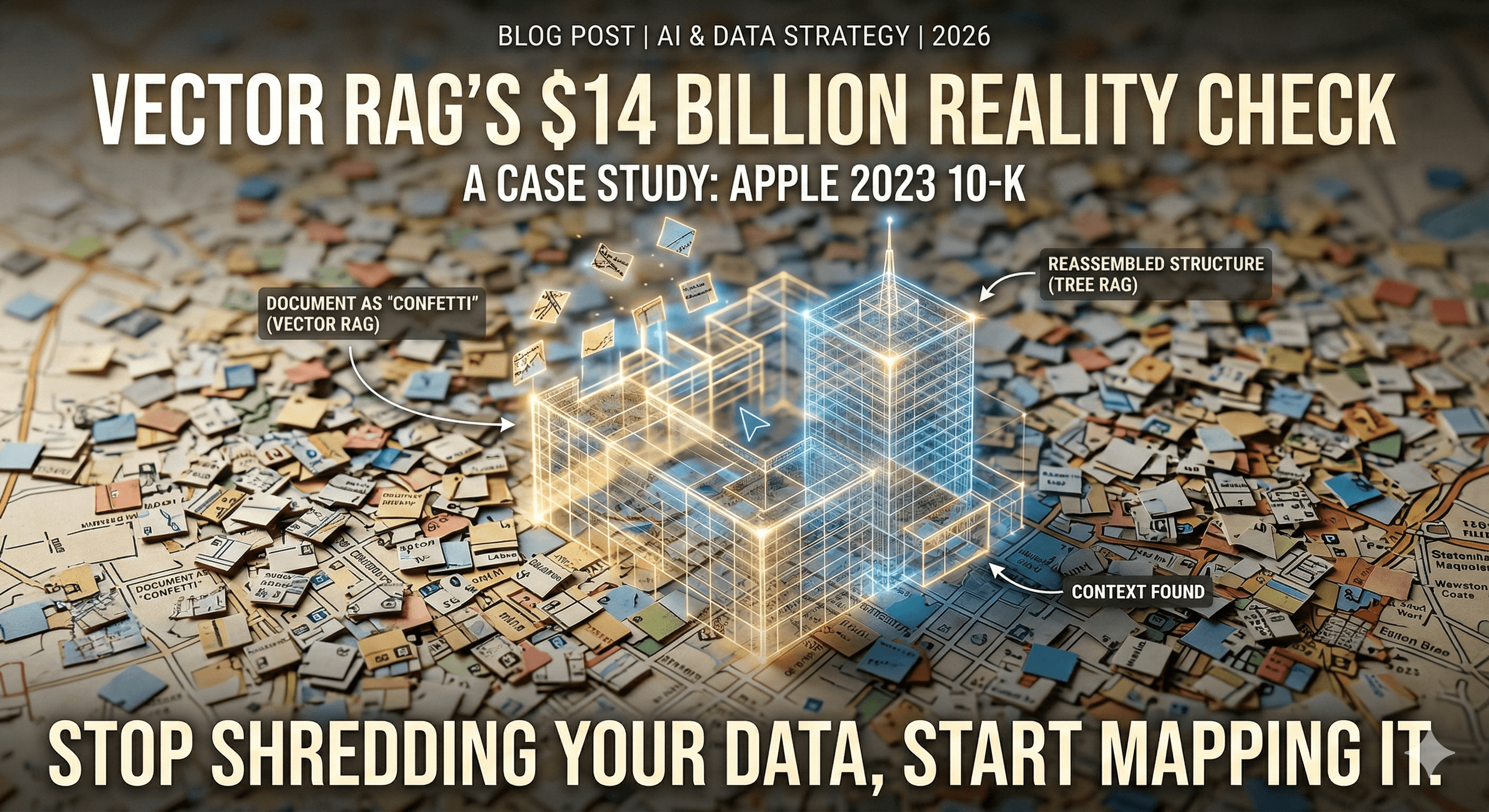

In the AI world of 2026, we have been sold a specific dream: Shred your PDF, turn the chunks into numbers (vectors), and let math find the answer. This is Vector RAG, and for simple FAQ bots, it works.

But we put this "standard" approach to a stress test using the Apple 2023 10-K. The result was a $14 billion reality check.

1. The Experiment: A "Trap" for Vector Math

We asked a two-part question that any financial auditor would care about:

"What was the Provision for income taxes in 2023, and what specific legal settlement is mentioned in the footnotes?"

The Contestants

Vector RAG (Pinecone): The industry standard. Shreds the document into 1,000-character "confetti" chunks and searches by similarity.

Tree RAG (PageIndex): The challenger. It maps the document's structure (hierarchy) and "navigates" it like a human librarian.
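To make the "shred and search" side concrete, here is a minimal sketch of what a Vector RAG retriever does. This is not Pinecone's API: `chunk_text`, `embed`, `cosine`, and `top_k` are hypothetical helpers, and `embed` is a toy word-count stand-in for a real embedding model.

```python
import math
import re
from collections import Counter


def chunk_text(text: str, size: int = 1000) -> list[str]:
    """Cut text into fixed-size character chunks, ignoring document structure."""
    return [text[i:i + size] for i in range(0, len(text), size)]


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model (assumption): lowercase word counts."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k(query: str, chunks: list[str], k: int = 3) -> list[tuple[float, str]]:
    """Score every chunk against the query and keep the k most similar."""
    q = embed(query)
    scored = [(cosine(q, embed(chunk)), chunk) for chunk in chunks]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)[:k]
```

Note that `chunk_text` has no idea where a footnote starts or ends. A 1,000-character window can cut a note in half, and whatever lands in the top `k` is all the LLM ever sees.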

2. The Result: Why the "Scent" Went Cold

The results were night and day.

| Target Info | Vector RAG (Pinecone) | Tree RAG (PageIndex) |
| --- | --- | --- |
| Tax Provision ($16.7B) | ✅ Found instantly. | ✅ Found. |
| Legal Settlement (€13.1B) | ❌ Failed. (Claimed none existed) | ✅ Success. Found the Irish Tax dispute. |

Why Vector RAG Failed (Technical Post-Mortem)

Vector search works by Semantic Similarity (Vibes). When you ask about "Taxes" and "Settlements" in one question, the embedding model compresses both intents into a single mathematical vector, so the stronger topic dominates what comes back.

The Dilution Effect: Because "Tax" appears 500 times in a 10-K, the query vector was "pulled" toward the main financial tables on page 31, and those chunks crowded out everything else in the top results.

The Keyword Gap: The legal dispute regarding Ireland (Note 7) uses words like "State Aid," "Escrow," and "Annulment." It rarely uses the word "Settlement" because Apple was still fighting it.

The Result: Pinecone looked for "Settlements," found a different footnote about employee stock options, and gave the LLM the wrong context. The AI, having no better info, told us: "No legal settlement mentioned."
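The failure mode above can be reproduced on a toy scale. The sketch below is an illustration under loud assumptions: the three chunks are made-up text (not quotes from the 10-K), and `embed` is a word-count stand-in for a real embedding model, which would capture some synonyms and so fail less dramatically. The ranking pattern is the point, not the exact scores.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding (assumption): lowercase word counts."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


# Made-up chunk text for illustration only.
CHUNKS = {
    "tax_table": "Provision for income taxes. Income before provision "
                 "for income taxes. Effective tax rate.",
    "stock_awards": "Net share settlement of employee stock awards.",
    "state_aid": "European Commission State Aid decision against Ireland; "
                 "recovery amount placed into escrow pending annulment appeal.",
}

QUERY = ("What was the provision for income taxes, and what legal "
         "settlement is mentioned in the footnotes?")


def rank(query: str, chunks: dict[str, str]) -> list[tuple[str, float]]:
    """Return (chunk name, similarity) pairs, most similar first."""
    q = embed(query)
    scores = {name: cosine(q, embed(text)) for name, text in chunks.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


for name, score in rank(QUERY, CHUNKS):
    print(f"{name:13s} {score:.3f}")
```

The tax table wins on sheer word overlap, the stock-award chunk sneaks into second place on the single word "settlement", and the chunk that actually answers the second half of the question shares no vocabulary with the query at all.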

3. The "Vectorless" Secret: Structural Reasoning

"Vectorless" or Tree RAG succeeded because it didn't look for the words; it looked at the Map.

Instead of a flat list of chunks, PageIndex built a Hierarchical Tree. When the query hit, the AI "Navigator" performed a Structural Hop:

Analyze: "Legal matters in a 10-K live in Item 3 or the Financial Notes."

Navigate: It went to the Tree Nodes for Note 7: Income Taxes and Note 12: Contingencies.

Extract: It read the entire section. It found the €13.1 billion Irish State Aid case. It didn't matter that the word "settlement" wasn't a perfect match; the AI reasoned that this was the "Legal Matter" the user was looking for.
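The three steps above can be sketched in a few lines. This is not PageIndex's actual API: `Node`, `analyze`, and `navigate` are hypothetical names, the section text is placeholder content, and the `SECTION_HINTS` lookup is a hard-coded stand-in for the reasoning an LLM navigator would do in the Analyze step.

```python
from dataclasses import dataclass, field
from typing import Iterator


@dataclass
class Node:
    """One section of the document tree: a title, its full text, its subsections."""
    title: str
    text: str = ""
    children: list["Node"] = field(default_factory=list)


def walk(node: Node) -> Iterator[Node]:
    """Depth-first traversal of the tree."""
    yield node
    for child in node.children:
        yield from walk(child)


# Hypothetical stand-in for the LLM's "Analyze" step:
# query keyword -> section titles where that kind of fact usually lives.
SECTION_HINTS = {
    "legal": ("legal proceedings", "contingencies", "income taxes"),
    "settlement": ("legal proceedings", "contingencies", "income taxes"),
}


def analyze(query: str) -> set[str]:
    """Map the query to the section titles worth visiting."""
    q = query.lower()
    return {hint for key, hints in SECTION_HINTS.items() if key in q for hint in hints}


def navigate(root: Node, query: str) -> list[Node]:
    """Return whole sections whose title matches a hint, not similarity-ranked chunks."""
    hints = analyze(query)
    return [n for n in walk(root) if any(h in n.title.lower() for h in hints)]


# Placeholder tree; the text fields are illustrative, not quotes from the filing.
root = Node("Form 10-K", children=[
    Node("Item 3. Legal Proceedings", "placeholder text"),
    Node("Item 8. Financial Statements", children=[
        Node("Note 7: Income Taxes", "placeholder text on the State Aid decision"),
        Node("Share-Based Compensation", "placeholder text"),
        Node("Note 12: Contingencies", "placeholder text"),
    ]),
])

for node in navigate(root, "What specific legal settlement is mentioned in the footnotes?"):
    print(node.title)
```

The design choice that matters is that selection happens on section titles and the extraction step reads the full section text. The word "settlement" never has to appear in the note for the note to be read.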

4. Is "Vectorless" Just Marketing Hype?

There is a lot of noise about "Vectorless RAG" being the "Pinecone Killer." Let's be honest for a second: it is not. It is a specialized tool.

Vector RAG (The Motorcycle): Use this for Scale. If you have 1,000,000 small files and need an answer in 50ms, stay with Pinecone. It is cheap, fast, and great for discovery.

Tree RAG (The Crane): Use this for Depth. If you have one massive, 200-page document where facts are "buried" in footnotes (legal, medical, or financial), you need a Tree. It is slower and costs more in LLM tokens, but it is far less likely to miss a fact buried in a footnote.

5. The 2026 Pro Move: Hybrid RAG

The question of whether "Vectorless" is the future matters because it forces us to admit that similarity is not the same as relevance. In a production-grade system, you don't choose between the two. You use a Hybrid Pipeline:

Search: Use Pinecone to find the 3 most relevant PDFs in your library.

Reason: Use a Tree Index to "deep dive" into those specific PDFs to find the hidden truth.
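Here is a minimal sketch of that two-stage pipeline, under stated assumptions. `search`, `reason`, and `hybrid` are hypothetical names; the word-overlap score is a toy stand-in for a vector database query; and each document's tree is flattened to a single level of titled sections to keep the example short. The library contents are invented.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercase word set, used by the toy similarity score."""
    return set(re.findall(r"[a-z]+", text.lower()))


def search(query: str, library: dict, k: int = 3) -> list[str]:
    """Stage 1 (Search): rank whole documents by word overlap with the query."""
    q = tokens(query)

    def score(name: str) -> float:
        d = tokens(library[name]["summary"])
        union = q | d
        return len(q & d) / len(union) if union else 0.0

    return sorted(library, key=score, reverse=True)[:k]


def reason(doc: dict, section_hints: tuple[str, ...]) -> dict[str, str]:
    """Stage 2 (Reason): read full sections whose title matches a structural hint."""
    return {
        title: text
        for title, text in doc["sections"].items()
        if any(hint in title.lower() for hint in section_hints)
    }


def hybrid(query: str, library: dict, section_hints: tuple[str, ...], k: int = 3) -> dict:
    """Cheap similarity search picks the documents; structure picks the sections."""
    return {name: reason(library[name], section_hints) for name in search(query, library, k)}


# Invented library for illustration only.
library = {
    "cookbook": {
        "summary": "Pasta recipes and sauces",
        "sections": {"Sauces": "placeholder text"},
    },
    "apple_10k_2023": {
        "summary": "Apple annual report income taxes financial statements",
        "sections": {
            "Note 7: Income Taxes": "placeholder text",
            "Share-Based Compensation": "placeholder text",
            "Note 12: Contingencies": "placeholder text",
        },
    },
}

result = hybrid("Apple income taxes legal matters", library,
                section_hints=("income taxes", "contingencies"), k=1)
print(result)
```

The split keeps the expensive step small: the similarity search runs over the whole library, while the section-level reading only runs over the handful of documents that survive stage 1.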

The Final Verdict

If you treat every document like a flat list of strings, you are leaving much of its intelligence on the table. Stop shredding your data and start mapping it.