Explaining Precision, Recall & F1 Without the Confusion

I used to spend time tracing back these formulas every time someone mentioned them in a paper. This is the post I wish I had on day one.

The Four Things That Can Happen

Forget formulas for a second. Every prediction your model makes falls into exactly one of four buckets. Using a stroke detection model as our example:

| | Model says: Sick | Model says: Healthy |
|---|---|---|
| Actually Sick | ✅ True Positive (TP): caught it | ❌ False Negative (FN): silent killer |
| Actually Healthy | ⚠️ False Positive (FP): false alarm | ✅ True Negative (TN): correct dismissal |

The trick to decode any term instantly:

"True/False" = was the model right or wrong?

"Positive/Negative" = what did the model predict?

So False Negative → model said Negative → but it was False (a lie) → patient was actually sick.
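If it helps to see the four buckets as code, here is a minimal sketch that sorts predictions into them. The function name `confusion_counts` and the labels are made up for illustration (1 = sick, 0 = healthy); nothing here comes from a real dataset.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Sort each (actual, predicted) pair into one of the four buckets."""
    tp = fp = fn = tn = 0
    for actual, predicted in zip(y_true, y_pred):
        if predicted == positive:
            if actual == positive:
                tp += 1  # model said sick, patient was sick
            else:
                fp += 1  # model said sick, patient was healthy (false alarm)
        else:
            if actual == positive:
                fn += 1  # model said healthy, patient was sick (miss)
            else:
                tn += 1  # model said healthy, patient was healthy
    return {"TP": tp, "FP": fp, "FN": fn, "TN": tn}


# Made-up labels for eight patients: 1 = sick, 0 = healthy
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 0, 1, 0, 1, 0, 0, 1]

counts = confusion_counts(y_true, y_pred)
print(counts)  # {'TP': 3, 'FP': 1, 'FN': 1, 'TN': 3}
```

Every prediction lands in exactly one bucket, so the four counts always add up to the number of patients.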

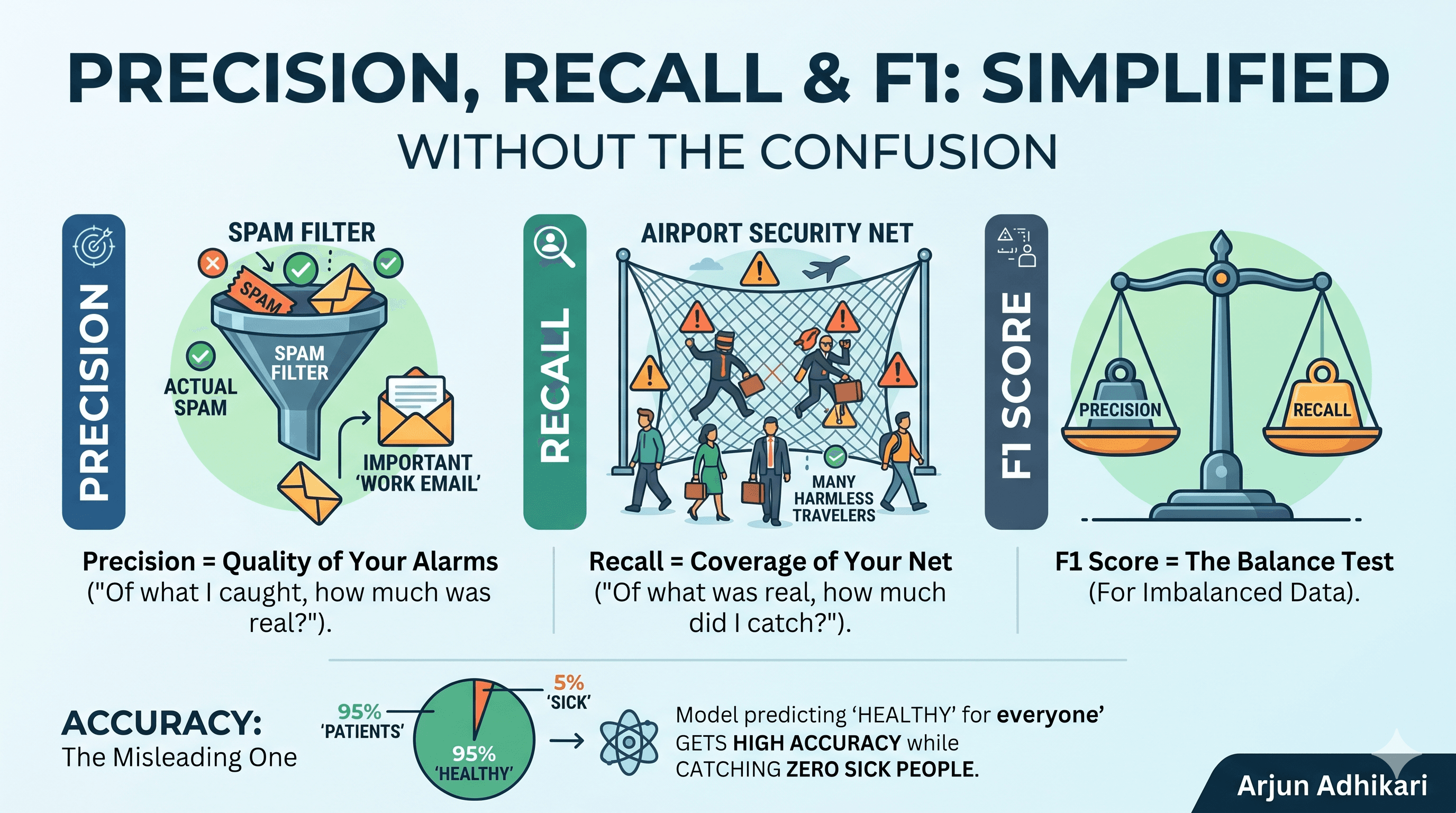

Precision — Quality of Your Alarms

$$Precision = \frac{TP}{TP + FP}$$

The question it answers: When the model shouts "sick!", how often is there actually a sick person?

Think of it as a spam filter. You want almost everything in your spam folder to actually be spam. You'd rather let a few spam emails into your inbox (misses) than accidentally delete an important email (false alarm). That's high precision.
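To make the spam folder picture concrete, here is a small sketch of the precision formula. The counts are invented, and returning 0.0 when the model never flags anything is my own assumption (the ratio is undefined in that case).

```python
def precision(tp, fp):
    """Share of positive predictions that were actually positive."""
    flagged = tp + fp
    # Assumption: treat "never flagged anything" as precision 0.0,
    # because TP / (TP + FP) is undefined when the denominator is 0.
    return tp / flagged if flagged else 0.0


# Hypothetical spam folder: 48 real spam emails, 2 important emails deleted by mistake
spam_precision = precision(tp=48, fp=2)
print(f"{spam_precision:.2f}")  # 0.96
```

Notice that spam that slipped into the inbox (the misses) never appears in this calculation. Precision only looks at what ended up in the spam folder.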

Recall — Coverage of Your Net

$$Recall = \frac{TP}{TP + FN}$$

The question it answers: Out of everyone who was actually sick, how many did the model catch?

Think of it as airport security. You flag everyone who might carry something dangerous. You'll inconvenience hundreds of innocent people (false alarms, low precision) — but you cannot miss a single real threat. That's high recall.

Recall is also the cheat-detector for imbalanced data. A model that always predicts "healthy" on a 95/5 dataset gets 95% accuracy — but its recall is 0%. It caught exactly zero sick people.
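Here is that "always healthy" model worked out as a quick sketch. The 1,000-patient cohort is an assumption chosen to match the 95/5 split; the helper names are mine.

```python
def recall(tp, fn):
    """Share of actual positives that the model caught."""
    positives = tp + fn
    # Assumption: return 0.0 when there are no actual positives at all.
    return tp / positives if positives else 0.0


def accuracy(tp, tn, fp, fn):
    """Share of all predictions that were correct."""
    return (tp + tn) / (tp + tn + fp + fn)


# Hypothetical cohort: 950 healthy, 50 sick. The model always says "healthy",
# so it never produces a positive prediction.
tp, fp = 0, 0
fn, tn = 50, 950

lazy_accuracy = accuracy(tp, tn, fp, fn)
lazy_recall = recall(tp, fn)
print(f"accuracy={lazy_accuracy:.2f}  recall={lazy_recall:.2f}")  # accuracy=0.95  recall=0.00
```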

F1 Score — The Balance Test

$$F1 = \frac{2 \times Precision \times Recall}{Precision + Recall}$$

F1 is the harmonic mean of precision and recall, so it stays close to the smaller of the two. If either precision or recall is 0, F1 = 0. No hiding. This is why F1 is a go-to metric for imbalanced datasets: it punishes models that game one metric at the expense of the other.
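A quick sketch of why F1 is harder to game than a plain average. The precision/recall pairs below are invented, and returning 0.0 when both inputs are zero is a convention I am assuming to avoid dividing by zero.

```python
def f1_score(precision, recall):
    """F1 as defined by the formula above."""
    if precision + recall == 0:
        # Assumption: define F1 as 0.0 when both inputs are 0 (avoids 0/0).
        return 0.0
    return 2 * precision * recall / (precision + recall)


# A lopsided model: perfect precision, terrible recall
lopsided_f1 = f1_score(1.0, 0.1)
lopsided_mean = (1.0 + 0.1) / 2  # plain average, for comparison

# A balanced model
balanced_f1 = f1_score(0.7, 0.7)

print(f"lopsided: F1={lopsided_f1:.2f}, plain average={lopsided_mean:.2f}")
print(f"balanced: F1={balanced_f1:.2f}")
```

The plain average rewards the lopsided model with 0.55, while F1 lands around 0.18, close to the weaker of the two numbers. The balanced model keeps its 0.70.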

Accuracy — The Misleading One

$$Accuracy = \frac{TP + TN}{TP + TN + FP + FN}$$

The trap: on a dataset with 95% healthy patients, a model that always says "healthy" gets 95% accuracy while catching zero sick people. Never use accuracy alone on imbalanced data.

🎮 Play With It Live

The best way to truly understand these metrics is to break them yourself.

👉 Open the Interactive Confusion Matrix

Try this: drag the FN slider to 80 and watch recall collapse while accuracy stays high. That's exactly how a dangerous model hides behind a good-looking number.
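If you'd rather see the same effect offline, here is a rough sketch that mimics dragging only the FN value. The other three counts are assumptions I picked for illustration and are not the interactive tool's actual defaults.

```python
def recall_and_accuracy(tp, fp, fn, tn):
    """Return (recall, accuracy) for one set of confusion-matrix counts."""
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    return recall, accuracy


# Hypothetical fixed counts; only FN moves, like a slider.
TP, FP, TN = 20, 10, 1890

results = {}
for fn in (0, 20, 80):
    results[fn] = recall_and_accuracy(TP, FP, fn, TN)
    r, a = results[fn]
    print(f"FN={fn:>2}  recall={r:.2f}  accuracy={a:.3f}")
```

With these made-up counts, recall drops from 1.00 to 0.20 as FN goes from 0 to 80, while accuracy only slips from about 0.995 to 0.955, because the large pile of true negatives dominates the total.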

When to Care About Which Metric

The domain tells you what to optimize. There are two failure modes — and they pull in opposite directions.

High Precision, Low Recall: "The Cautious Expert". Only raises the alarm when near-certain. Misses some cases, but rarely cries wolf. → Use when false alarms are expensive: spam filters, criminal sentencing, loan approvals.

High Recall, Low Precision: "The Paranoid Net". Catches nearly everything. Lots of false alarms, but very little slips through. → Use when misses are dangerous: cancer screening, fraud detection, stroke detection.

The question to always ask: "Which mistake is more costly — a false alarm, or a miss?"
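In practice, the usual knob for moving between these two modes is the decision threshold on the model's score. Here is a minimal sketch of that idea; the scores, labels, and thresholds are all invented, and `precision_recall_at` is a hypothetical helper, not a library function.

```python
def precision_recall_at(threshold, scores, labels):
    """Precision and recall when every score >= threshold counts as 'sick'."""
    tp = fp = fn = 0
    for score, label in zip(scores, labels):
        predicted_sick = score >= threshold
        if predicted_sick and label == 1:
            tp += 1
        elif predicted_sick and label == 0:
            fp += 1
        elif not predicted_sick and label == 1:
            fn += 1
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Made-up model scores (higher = more likely sick) and true labels (1 = sick)
scores = [0.95, 0.90, 0.80, 0.70, 0.60, 0.40, 0.30, 0.20, 0.10, 0.05]
labels = [1, 1, 0, 1, 0, 1, 0, 0, 0, 0]

cautious = precision_recall_at(0.85, scores, labels)  # "The Cautious Expert"
paranoid = precision_recall_at(0.35, scores, labels)  # "The Paranoid Net"

print(f"threshold 0.85: precision={cautious[0]:.2f}, recall={cautious[1]:.2f}")
print(f"threshold 0.35: precision={paranoid[0]:.2f}, recall={paranoid[1]:.2f}")
```

On this toy data the strict threshold gives precision 1.00 with recall 0.50, and the loose one gives recall 1.00 with precision 0.67. Same model, different mistakes, which is why the cost question has to come first.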

The Cheat Sheet

Bookmark this for the next time someone shares model numbers in a paper or meeting:

| What you see | What it actually means |
|---|---|
| High accuracy, low recall | Lazy model hiding behind imbalanced data |
| High precision, low recall | Very cautious, missing many real cases |
| High recall, low precision | Catches nearly everything, too many false alarms |
| High F1 | Precision and recall are both high: balanced and genuinely working |
| F1 = 0 | Precision or recall is zero, which means no true positives at all: model is broken |

One Line to Remember

Precision = "Of what I caught, how much was real?" Recall = "Of what was real, how much did I catch?"

That's it. You'll never need to trace back the formula again.

Built an ML model recently? Drop your precision/recall numbers in the comments — let's talk about what they actually mean for your use case.